Not very long ago, I watched a video by Vi Hart about $\pi$ vs. $\tau$ radicalization. The video jests, but not really, about the problem of dehumanizing people with differing opinions on the internet. I am not sure if this really happens with $\pi$ vs. $\tau$, which is a fake controversy (or is it?) with educational purposes. In any case, this attack on differing opinions is a real phenomenon, so I will try to tread lightly. This post is about how the $\tau$ approach, which replaces $\pi$ in mathematical formulas with $\tau=2\pi$, is on the wrong track, even if it counts among its supporters fine people such as John Conway and Vi Hart. I will try to explain why and, while doing it, introduce the reader to some deep and beautiful mathematics.

In case you only want to get the gist of it and not read the post, $\pi$ is at the center of a combinatorial/geometrical generalization involving Euler's Gamma function. The new constant, $\tau$, does not fit well in this generalization. Absorbing some of the twos in the formulas can (and should) be done by using the diameter instead of the radius, as it is a more fundamental concept.

Introduction

First of all, $\pi$ is a mathematical constant which has been known since ancient Greek times, although the Chinese, the Babylonians, the Egyptians, the Indians and any ancient civilization that studied geometry and mathematics rediscovered it in one way or another. Some civilizations discovered only rough approximations, others found algorithms or series which calculate the irrational number to any precision.

The number $\pi$ itself is fascinating: it is irrational, it seems to appear out of nowhere in different formulas, it may be normal¹ (it is unknown at the moment), and the quest to calculate more of its digits has pushed the science and engineering of computation forward. An example of this is the discovery of the fantastic spigot algorithm.

¹A normal number is a real number whose digits, and blocks of digits of any fixed length, are distributed uniformly in every base.

Anyway, $\pi$ was originally defined as the ratio of the circumference of a circle to its diameter (twice the radius).

So, if we have a circle of radius $r$, the circumference is $C=2\pi r$.

So what is the polemic? The $\tau$-ists propose to be done with $\pi$ and use $\tau=2\pi$ as the constant. The $\pi$-ists oppose them. Bob Palais, an ardent proponent of $\tau$, has documented some of the history of the polemic; there is a very famous video by Vi Hart, a tau manifesto, an xkcd comic strip, and a special page dedicated to it by Robin Whitty (Theorem of the Day).

First let's recap the most popular and apparently unrelated places where $\pi$ appears. We will explain them in more detail and simpler terms later.

The same constant also appears in the area of the circle, $S_{circle}=\pi r^2$, in the volume of the sphere, $V_{sphere}=\frac{4\pi r^3}{3}$, and in general in the formulas for spheres in various dimensions (hyperspheres, of which the circle and the sphere are particular cases).

On an apparently unrelated issue, the same constant appears in Euler's identity, $e^{i\pi} + 1 = 0$, where $e$ is the base of the natural logarithm (Napier's constant) and $i$ is the imaginary unit (the square root of $-1$, so $i^2=-1$).

Of course, Euler's identity is closely related to the circle, because of Euler's formula, $$e^{ix}=\cos{(x)} + i \sin{(x)},$$ where $e$ is again Napier's constant, $i$ the imaginary unit, and $\sin(x)$ and $\cos(x)$ are the trigonometric functions sine and cosine with the argument in radians.

Radians are a measure of angle: the length of the arc or, in other words, the part of the circumference of the unit circle (of radius $1$) spanned by the angle being measured.
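Euler's formula and the identity can be checked numerically. The following is a minimal sketch using only Python's standard library; the helper name `euler_rhs` is mine, and the comparison is up to floating-point rounding, not exact.

```python
import cmath
import math


def euler_rhs(x: float) -> complex:
    """Right-hand side of Euler's formula: cos(x) + i sin(x), x in radians."""
    return complex(math.cos(x), math.sin(x))


# Compare both sides of e^{ix} = cos(x) + i sin(x) at a few angles.
for x in (0.0, 1.0, math.pi / 2, math.pi):
    assert abs(cmath.exp(1j * x) - euler_rhs(x)) < 1e-12

# Euler's identity is the special case x = pi: e^{i pi} + 1 should vanish
# up to rounding error.
identity_residual = abs(cmath.exp(1j * math.pi) + 1)
print(identity_residual)
```

The printed residual is on the order of machine precision, which is as close to $0$ as floating-point arithmetic gets here.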

The appearance of $\pi$ in Euler's identity is related to the complex numbers being a two-dimensional space and a Clifford algebra. I will not get into detail here, but $\pi$ is there because of circles and hyperspheres and their relation with rotations and rotors. This also explains the appearance of $\pi$ in Cauchy's integral theorem, in the Gauss and Stokes theorems, and in their various generalizations for differential forms and exterior derivatives. This means that if we understand circles, there is not much new in terms of $\pi$ in all these cases.

The same constant appears again when dealing with the Gamma function, a natural continuous generalization of the factorial, $n!=n(n-1)(n-2)\cdots2\cdot1$, which we will explain later. For positive real numbers $z$, the Gamma function is given by the integral

$$\Gamma(z)=\int_0^\infty x^{z-1}e^{-x}\, dx.$$

It is easy to prove (by integration by parts and induction) that when $n>0$ is a positive integer, $\Gamma(n)=(n-1)!$.

Our constant appears in the formula $\Gamma(\frac{1}{2})=\sqrt{\pi}$. Because of the properties of the Gamma function, its value at every half-integer is related to $\pi$: for every integer $n\ge 0$ we have the two formulas

$$\Gamma\Bigg(\frac{1}{2}+n\Bigg)=\frac{(2n)!}{4^n n!}\sqrt{\pi}$$ and

$$\Gamma\Bigg(\frac{1}{2}-n\Bigg)=\frac{(-4)^n n!}{(2n)!}\sqrt{\pi}.$$
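These two closed forms are easy to test against a library implementation. Here is a small sketch that compares them with `math.gamma` from Python's standard library; the function names `gamma_half_plus` and `gamma_half_minus` are mine, chosen only for illustration.

```python
import math


def gamma_half_plus(n: int) -> float:
    """Closed form for Gamma(1/2 + n), with n a non-negative integer."""
    return math.factorial(2 * n) / (4**n * math.factorial(n)) * math.sqrt(math.pi)


def gamma_half_minus(n: int) -> float:
    """Closed form for Gamma(1/2 - n), with n a non-negative integer."""
    return (-4) ** n * math.factorial(n) / math.factorial(2 * n) * math.sqrt(math.pi)


for n in range(6):
    # Half-integer values on both sides of zero.
    assert math.isclose(math.gamma(0.5 + n), gamma_half_plus(n), rel_tol=1e-9)
    assert math.isclose(math.gamma(0.5 - n), gamma_half_minus(n), rel_tol=1e-9)
    # Integer values: Gamma(n + 1) = n!
    assert math.isclose(math.gamma(n + 1), math.factorial(n), rel_tol=1e-9)
```

Note that no $2$ accompanies $\pi$ in either closed form; the only constant is $\sqrt{\pi}$.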

Getting rid of those pesky twos

The proponents of $\tau$ have their hearts in the right place. Their idea is that a lot of the circle formulas have a $2$ which could be absorbed by the circle constant $\tau$. In this way, radians go from $0$ to $\tau$, Euler's identity becomes (by squaring) $e^{i\tau} = 1$, and most of the everyday uses of $\pi$ have one less constant. Other than the complaint that the letter $\tau$ is already taken in other fields, or the appearance of $\pi$ without a $2$ in a different family of formulas, the controversy as it stands can be summarized as: the side with more formulas should win the argument. As an example, the Pi manifesto looks like a mirror reflection of the Tau manifesto in this regard. There is also the argument that, since the win is unclear, a better strategy would be to keep the status quo, which is the path of least effort. Everyone already knows what $\pi$ stands for.

I have what I believe is a qualitatively different argument for why $\pi$ is better, that of naturality in the definition. I am not using naturality here as in category theory, but the concept is similar. To avoid confusion, I will call this property platonicity, which is a made-up word I use to describe the essence of platonic objects, mathematical ideal objects. We have to try to maximize platonicity in the definition of the constant. When I say a definition of a constant has platonicity, I mean that the definition respects the internal structure of the formula and does not obstruct its generalization. The mathematical object (platonic object) approximates a concept which is useful as a building block for other concepts, because it is closer to being discovered than invented. This is, of course, a view based on mathematical platonism, which is, in any case, at the heart of many of the arguments already present.

While $\tau$-ists (and other fellow $\pi$-ists) argue in a sense from platonicity, they only characterize it as having fewer constants in the greatest number of formulas. I will rather argue that the important issue to consider is how formulas and concepts evolve as they are generalized.

So why is $\pi$ special? How do we generalize it?

There are two fundamental concepts from which $\pi$ emerges. Both concepts merge, once we generalize enough, and both are important to understand the matter at hand.

Geometrical $\pi$

As we saw, the ancient definition of $\pi$ comes from the circumference of a circle. It is a geometrical concept, and there are two ways I know to generalize it. First of all, as in all things

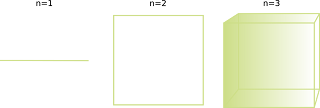

geometrical, by adding dimensions. So, we start with a circle. A circle is the set of points at the same distance of a special point called center. This generalizes easily to any number of dimensions. In 3D it is a sphere, and in more dimensions, it is the more difficult to see hypersphere.

A circle is defined by the equation $$\sqrt{x^2+y^2}=r.$$

For a sphere $$\sqrt{x^2+y^2+z^2}=r.$$

The generalization to all dimensions is clear, $$\sqrt{x_1^2+x_2^2+x_3^2+\cdots+x_n^2}=r$$ where $n$ is the number of dimensions. This can be written more succinctly as $$\sqrt{\sum_{i=1}^n x_i^2}=r.$$

The left side, the square root of the sum of squares, $$\sqrt{\sum_{i=1}^n x_i^2}$$ is used to define a distance or a length in the space we are working in. This distance is called the Euclidean distance.

With these definitions, we can calculate the generalization of the formula $C=2\pi r$.

I will not reproduce the calculation here, but it concerns in any case integrals and induction. Follow the links for all the gory details.

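The important thing is that the surface area of a hypersphere is:

$$S_{n+1}(r) = (2\pi r)V_n(r) = (2\pi r)\frac{\pi ^\frac{n}{2}}{\Gamma{\Big(\frac{n}{2}+1\Big)}}r^n,$$

where $V_n(r)$ is the volume of the $n$-dimensional ball and $S_{n+1}(r)$ is the $(n+1)$-dimensional area of the sphere that bounds the $(n+2)$-dimensional ball. For $n=0$ this gives back the circumference, $S_1(r)=2\pi r$.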

The Euler Gamma appears again. We can rewrite the whole formula in terms of it only, or get rid of it with the formula we gave above. Let's start with the second path. The surface area of a sphere is the derivative of the volume of the ball with respect to the radius, so the volume formula, $V_n(r)=\frac{\pi^{\frac{n}{2}}}{\Gamma{\big(\frac{n}{2}+1\big)}}r^n$, is simpler and more fundamental, and we will work with that. The half-integer expansion, $\Gamma\Bigg(\frac{1}{2}+n\Bigg)=\frac{(2n)!}{4^n n!}\sqrt{\pi},$ applies when the dimension is odd. Writing the dimension as $2n-1$, so that $\frac{2n-1}{2}+1=\frac{1}{2}+n$, the resulting formula is

$$V_{2n-1}(r)=\frac{4^n\, n!}{(2n)!}\,\pi^{n-1}\,r^{2n-1}.$$

It is very difficult to find a pattern, but one thing is clear: changing $\pi$ for $\tau$ will not make it better. Following the other path we spoke about, which is replacing $\pi$ with its Gamma representation, $\sqrt{\pi}=\Gamma{\big(\frac{1}{2}\big)}=2\,\Gamma{\big(\frac{1}{2}+1\big)}$, we get

$$V_n(r)=\frac{\Big(2r\,\Gamma{\Big(\frac{1}{2}+1\Big)}\Big)^n}{\Gamma{\Big(\frac{n}{2}+1\Big)}}.$$
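As a sanity check, this Gamma-only form can be evaluated for the familiar low-dimensional cases. The sketch below uses Python's `math.gamma`; the function name `ball_volume` is an assumption of mine, not something from the sources linked in this post.

```python
import math


def ball_volume(n: int, r: float = 1.0) -> float:
    """Volume of the Euclidean n-ball: (2 r Gamma(3/2))^n / Gamma(n/2 + 1)."""
    return (2 * r * math.gamma(1.5)) ** n / math.gamma(n / 2 + 1)


r = 2.0
assert math.isclose(ball_volume(1, r), 2 * r)                    # segment of length 2r
assert math.isclose(ball_volume(2, r), math.pi * r**2)           # disc
assert math.isclose(ball_volume(3, r), 4 * math.pi * r**3 / 3)   # ordinary ball

# It also agrees with the usual form pi^(n/2) r^n / Gamma(n/2 + 1).
for n in range(1, 10):
    usual = math.pi ** (n / 2) * r**n / math.gamma(n / 2 + 1)
    assert math.isclose(ball_volume(n, r), usual)
```

The factor $2r$ shows up as a single unit inside the power, which is the first hint that the twos travel with the radius.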

This makes the pattern much clearer and points to the twos we wanted to get rid of as belonging to the radius. So, there are two questions we should ask ourselves now. What is the Gamma function, fundamentally? And why is it so important for spheres? Let's explore the Gamma function a little more deeply.

Euler's Gamma

So, what is this Euler's Gamma function we keep bumping into? One interesting way to find new things in mathematics is to generalize something discrete (like the integer numbers) into something continuous (like the real numbers). Continuous things are, sometimes, easier to work with, because of calculus (integrals and derivatives), and because limits exist.

Many years ago, Euler, a genius and one of the most prolific mathematicians in history, asked himself the following question: how do we find a continuous generalization of the factorial?

Later, some other mathematicians asked how many of these generalizations are there?

So, we want to "fill in the gaps", as it were, between the integer numbers. This is the plot for the factorial:

And we want to find its value in the middle (the red line):

It turns out that if we ask the function to have the minimum properties one could ask for, only Euler's Gamma function can satisfy them. In this sense, there is a unique generalization of the factorial, which is amazing! Euler's Gamma is the continuous version of the factorial. This is precisely the function that Euler discovered.

What are these properties we would like the factorial to have?

Of course, we want the function to be positive for positive arguments. We also want the function to be continuous so we can calculate limits and so on, which is the point of this whole exercise; there should be no gaps.

There is a convention that $0!=1$. There are combinatorial reasons for this. And this lets us set up the base case for an inductive definition of the factorial, from which we generalize to our function.

We can define the factorial by induction as

$$n! =\begin{cases}1, & n=0\\ n\,(n-1)!, & n>0\end{cases}.$$

We can use this as a property for our function. This means that our function should satisfy

$$f(0)=1,\qquad f(x+1)=(x+1)\,f(x)\quad\text{for } x\ge 0,$$

so that $f(n)=n!$ at the integers.

With integer numbers this is enough, but continuous real functions have more degrees of freedom, so we will need some extra properties (just one). What is the problem?

The problem is that, while the values are fixed at the integer numbers, outside of them they may fluctuate. We have to set up conditions so that this does not happen.

So, intuitively we would like the function to not oscillate unnecessarily. This would make no sense:

The technical term for a function that is curved belly down and does not have any additional humps is a convex function.

The factorial is multiplicative and grows very fast, so it is very common to take its logarithm. The logarithm converts multiplication to addition, so $\log(n!) = \log(n)+\log(n-1)+\cdots+\log(2)$. This way the values are smaller and we can add them together to obtain the logarithm of the factorial. Calculating and reasoning with logarithmic values is easier. Taking the logarithm of the value of a function straightens its graph, but if we look at the discrete plot for the logarithm of the factorial, it still has a curved belly, so it is convex. As a consequence, we would like the plot of the log of the Gamma function to still be convex (we say the Gamma is logarithmically convex). And that is it. There are no other continuous, real, positive functions which behave like a generalized factorial (the functional equation above) and which are logarithmically convex. This is the Bohr-Mollerup theorem. A simple proof can be found here.
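Both properties can be spot-checked numerically. The sketch below takes the candidate to be $f(x)=\Gamma(x+1)$, so that $f(n)=n!$ (that shift is my reading of the functional equation above), and tests the recurrence and midpoint convexity of $\log f$ at sample points. It is a sampled check under those assumptions, not a proof; the names `f`, `log_f`, `recurrence_ok` and `midpoint_convex` are mine.

```python
import math


def f(x: float) -> float:
    """Candidate continuous factorial: f(x) = Gamma(x + 1), so f(n) = n!."""
    return math.gamma(x + 1)


def log_f(x: float) -> float:
    """log f(x), computed stably with lgamma."""
    return math.lgamma(x + 1)


xs = [0.1 * k for k in range(1, 100)]  # sample points in (0, 10)

# Functional equation: f(x + 1) = (x + 1) f(x).
recurrence_ok = all(
    math.isclose(f(x + 1), (x + 1) * f(x), rel_tol=1e-9) for x in xs
)

# Midpoint convexity of log f: the value at x is not above the average
# of the values at x - h and x + h.
h = 0.05
midpoint_convex = all(
    log_f(x) <= (log_f(x - h) + log_f(x + h)) / 2 for x in xs
)

print(recurrence_ok, midpoint_convex)
```

A function that agreed with the factorial at the integers but wiggled in between would pass the first check and fail the second somewhere.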

This is why the Gamma function is so important. Once defined for positive real numbers (Euler's original definition as a limit, or the integral above, Euler again), it can be extended to negative non-integer numbers with the functional equation, and then by analytic continuation to all complex numbers except zero and the negative integers, where it has poles. All these generalizations are unique; only one function fits the bill.

I am glossing over the details, but there are proofs in the links.

All this is to say that Euler's Gamma Function is not some random integral, but the continuous version of the factorial, and that this relation with $\pi$ deserves further scrutiny.

Other distances

We have already seen that hyperspheres are defined as the set of points at a constant distance from another point we call the center. We used the Euclidean distance, which comes fundamentally from the (generalized) Pythagorean theorem. This distance is the square root of the sum of the squares of the coordinates of the point (if the center is the origin). We wrote this as $$d(0, x)=\sqrt{\sum_{i=1}^n x_i^2}$$ or in two dimensions as $$d(\{0, 0\}, \{x,y\})=\sqrt{x^2+y^2}.$$ Let's play in two dimensions; we can easily generalize later.

The square root is really having $\frac{1}{2}$ as exponent, so we can also write the distance as

$$d(\{0, 0\}, \{x,y\})=(x^2+y^2)^{\frac{1}{2}}.$$

If we were to change the definition of distance, we would change the value of $\pi$, even if we kept everything else the same. To define a distance (the technical term in mathematics is a metric) and keep ourselves sane, we have to make it behave properly by making it satisfy some conditions. Any sane metric is never negative, is zero only when the two ends of the segment we are measuring are the same point, gives the same distance from $a$ to $b$ as from $b$ to $a$ (symmetry), and makes going through a third point take at least as long as going directly (triangle inequality). These properties define a metric but, unlike with the Gamma function, there are many metrics to choose from. Each will define a value of pi. There is a family of metrics which is a very simple generalization of the Euclidean metric: the metrics which come from the p-norm. A metric is the distance between two points and a norm is the length of a vector, so they are closely related. From any norm there is an induced metric: subtract the two vectors to get the vector from the origin to the destination, and then measure its length.

If one of the points is the origin, our metric (distance) will be defined as $$d(\{0, 0\}, \{x,y\})=(|x|^p+|y|^p)^{\frac{1}{p}}.$$ This is normally written for any two vectors as $$||\overline{v}-\overline{w}||_p=(|x_v-x_w|^p+|y_v-y_w|^p)^{\frac{1}{p}}$$ where the two lines $||$ represent the norm.

This family of metrics (one for each value of $p$, with $p=2$ being the Euclidean or regular everyday distance) is of particular interest because it generalizes the Euclidean metric while at the same time getting rid of the twos which come from using this particular distance, so it will show us which constants come from where. The cases $p=1$ and $p\rightarrow \infty$ are also of particular interest (when $0<p<1$ the definition does not satisfy the triangle inequality, so it is not a metric).
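The failure of the triangle inequality for $0<p<1$ can be seen with a single pair of vectors. A minimal sketch follows; `p_norm` and `triangle_ok` are hypothetical helper names of mine.

```python
def p_norm(v, p):
    """(sum |v_i|^p)^(1/p); a genuine norm only when p >= 1."""
    return sum(abs(c) ** p for c in v) ** (1 / p)


def triangle_ok(v, w, p, tol=1e-12):
    """Check ||v + w||_p <= ||v||_p + ||w||_p for one pair of vectors."""
    s = [a + b for a, b in zip(v, w)]
    return p_norm(s, p) <= p_norm(v, p) + p_norm(w, p) + tol


v, w = (1.0, 0.0), (0.0, 1.0)

# For p = 1/2 the "length" of v + w = (1, 1) is (1 + 1)^2 = 4,
# while the lengths of v and w add up to only 2.
print(p_norm((1.0, 1.0), 0.5), p_norm(v, 0.5) + p_norm(w, 0.5))
print([triangle_ok(v, w, p) for p in (0.5, 1, 2, 3)])
```

With $p=\frac{1}{2}$, going directly from the origin to $(1,1)$ would be "longer" than going through $(1,0)$, which is exactly what a metric must not allow.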

In two dimensions, what do these distances look like? Well, say $p=1$, then $$d(v, w)=||v-w||_1=|x_v-x_w|+|y_v-y_w|.$$ This is called the taxicab distance. If you divide the space into little squares (like the streets in a Roman grid or, for example, in Manhattan), the number of blocks it takes to get to a place is the taxicab distance.

Examples of three paths of different taxicab length

In our case, where the space is continuous, you can think of these squares as being infinitesimally small. What is a circle for this distance? A square with its vertices on the axes.

Taxicab circle of $r=3$ with one path marked in red

Taxicab circle in continuous space (when the block size tends to 0)

What happens on the other edge case, in the limit where $p\rightarrow\infty$?

In that case, after taking the limit, we obtain $$||\overline{v}-\overline{w}||_\infty=\max(|x_v-x_w|,|y_v-y_w|).$$ As a circle you get a square again, but with edges parallel to the axes. Intermediate values of $p$ give shapes in between, with $p=2$ being the ordinary circle.

What is the value of pi in these cases? Is pi somehow special? When defining pi for these spaces, one has to be careful to use the distance consistently to measure both the circumference and the radius. These concepts are explained very well at an introductory level in this video. $\pi_2=3.14\ldots$ is definitely special, because it is the minimum value of generalized $\pi$ for this family of distances, which is called $\pi_p$. The maximum is attained for $p=1$ and $p=\infty$, where $\pi_1=\pi_\infty=4$. Things like the radians and trigonometric functions can be generalized successfully to this setting.
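These values of $\pi_p$ can be approximated numerically. The sketch below is my own construction, not taken from the linked material: it walks around a quarter of the unit $p$-circle with a fine polyline, measures each small segment with the same $p$-norm, and divides the full circumference by the diameter $2$. The names `p_norm` and `pi_p`, and the number of steps, are arbitrary choices.

```python
import math


def p_norm(v, p):
    """p-norm of a vector; p = math.inf gives the maximum norm."""
    if math.isinf(p):
        return max(abs(c) for c in v)
    return sum(abs(c) ** p for c in v) ** (1 / p)


def pi_p(p, steps=20000):
    """Circumference of the unit p-circle, measured in the p-norm, over 2."""
    quarter = 0.0
    prev = (1.0, 0.0)
    for k in range(1, steps + 1):
        theta = (math.pi / 2) * k / steps
        direction = (math.cos(theta), math.sin(theta))
        scale = p_norm(direction, p)  # rescale onto the unit p-circle
        point = (direction[0] / scale, direction[1] / scale)
        quarter += p_norm((point[0] - prev[0], point[1] - prev[1]), p)
        prev = point
    return 4 * quarter / 2


for p in (1, 1.5, 2, 3, math.inf):
    print(p, pi_p(p))
```

In this approximation the values at $p=1$ and $p=\infty$ come out at $4$, the value at $p=2$ matches the usual $\pi$, and the intermediate values sit in between.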

These formulas are easily generalizable to more dimensions. What are the hyperspheres in that case?

Let's call them by their name, $n$-balls of norm $p$, where $n$ is the dimension and $p$ is the $p$ in the formula for the norm. Well, for three dimensions, $n=3$, when $p=1$ the ball is an octahedron and when $p\rightarrow\infty$ one gets a cube. The three balls can be seen (cut open) in this picture:

Hyperballs in 3 dimensions

In other words, if we are in three dimensions, $||\overline{v}||_1=R$ is an octahedron. The generalization of a cube to more dimensions is called a hypercube or measure polytope, which is precisely the family of $n$-balls of norm $p=\infty.$ The generalization of the octahedron is the hyperoctahedron or cross-polytope, which is the family we get for $p=1$.

Hypercubes (measure polytopes) for different dimensions

Hyperoctahedra (crosspolytopes) for different dimensions

The volume inside an $n$-ball of radius $r$ of norm $p$, that is, the volume of the set of points satisfying $||\overline{v}||_p\le r$, is

$$V_n^p(r)=\frac{\Big(2r\,\Gamma{\Big(\frac{1}{p}+1\Big)}\Big)^n}{\Gamma{\Big(\frac{n}{p}+1\Big)}}.$$
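To see the formula at work, here is a short sketch that evaluates it for the three shapes discussed above. The function name `p_ball_volume` is mine, and treating $p=\infty$ by setting $\frac{1}{p}=0$ is an assumption about how to read the limit.

```python
import math


def p_ball_volume(n: int, p: float, r: float = 1.0) -> float:
    """Ordinary (Lebesgue) volume of the n-ball of radius r in the p-norm."""
    inv_p = 0.0 if math.isinf(p) else 1.0 / p  # p -> infinity read as 1/p = 0
    return (2 * r * math.gamma(inv_p + 1)) ** n / math.gamma(n * inv_p + 1)


r = 1.5
# p = 2: the usual ball, 4/3 pi r^3.
assert math.isclose(p_ball_volume(3, 2, r), 4 * math.pi * r**3 / 3)
# p = 1: octahedron with vertices at distance r from the center, 4/3 r^3.
assert math.isclose(p_ball_volume(3, 1, r), 4 * r**3 / 3)
# p = infinity: cube of side 2r.
assert math.isclose(p_ball_volume(3, math.inf, r), (2 * r) ** 3)
# In two dimensions, p = 1 is a square with diagonal 2r, so area 2 r^2.
assert math.isclose(p_ball_volume(2, 1, r), 2 * r**2)
```

The only place $\pi$ enters is through $\Gamma{\big(\frac{1}{2}+1\big)}$ when $p=2$; for $p=1$ and $p=\infty$ the same formula produces purely rational volumes.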

We see now that $\pi$ clearly comes from the Gamma function, that $\Gamma(\frac{1}{2})=\sqrt{\pi}$ is quite fundamental when thinking about $\pi$, and that there is no $2$ in that part of the formula. The only remaining twos in the formula come from the radius not being fundamental; if we used the diameter, there would not be any extraneous constants and everything would be cleaner.

Note that we can also generalize volume (let's call it $p$-volume) and calculate the generalized $p$-volumes of the $n$-balls of $p$-norm, which we will talk about later. This requires some sophisticated mathematics. The volume used above is the standard regular volume, not derived in any way from the norm.

As an aside, the fact that measure polytopes and cross-polytopes are dual to each other has to do with the norms of which they are hyperballs being dual, which happens for two norms $p$ and $q$ if $1/p + 1/q = 1$. A theorem by Minkowski states that if the norms are dual, the balls are polar sets. See this paper for more details.

Combinatoric generalization

We have seen that the limits of the $p$-norm $n$-balls are polyhedra. There are many connections between combinatorics and polyhedra (or, more generally, polytopes). Combinatorics is the branch of mathematics which counts and obtains properties of configurations of finite or discrete sets. Polyhedra are discrete sets of vertices and edges, so combinatorics is deeply entwined with properties of polyhedra. Combinatorics is also related to finite groups, probabilities, etc. In many of the formulas used in combinatorics, the Gamma function and $\pi$ appear magically, but the ideas above can give a clear path of generalization from simpler concepts, which enables understanding. To find everything about generalized polyhedra and their properties, find a copy of ''Regular Polytopes'' by Coxeter.

Both hypercubes (measure polytopes) and hyperoctahedra (cross-polytopes) can be defined by adding one dimension at a time and specifying which edges are connected to the edges in this new dimension.

There are actually only three families of regular polytopes that exist for any number of dimensions, the third one being the regular simplices (hypertetrahedra).

For example, we start with a segment (one dimension, two vertices) and add a new dimension. If we are growing a hypercube, we double the number of vertices at each step. The square will have $4$ vertices, the cube $8$ and so on. The number of vertices for dimension $n$ will be $2^n$. For a hyperoctahedron, we always add two opposed, unconnected vertices, so the number of vertices for dimension $n$ will be $2n$. In the hyperoctahedron, all the pairs of non-opposite vertices are connected to one another or, in other words, each vertex has $2n-2$ edges connected to it. For the hypercube, each vertex is connected to $n$ other vertices, so the number of edges at each vertex is $n$.
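These counts can be verified by building both families explicitly. The sketch below uses one common coordinate model, which is my assumption and not part of the text: hypercube vertices are all sign vectors $(\pm1,\ldots,\pm1)$, and hyperoctahedron vertices are the unit vectors $\pm e_i$. All function names are hypothetical.

```python
from itertools import combinations, product


def hypercube(n):
    """Vertices (+-1, ..., +-1); edges join vertices differing in one coordinate."""
    vertices = list(product((-1, 1), repeat=n))
    edges = [
        (a, b)
        for a, b in combinations(vertices, 2)
        if sum(x != y for x, y in zip(a, b)) == 1
    ]
    return vertices, edges


def cross_polytope(n):
    """Vertices +-e_i; edges join every pair of non-opposite vertices."""
    vertices = [
        tuple(sign if j == i else 0 for j in range(n))
        for i in range(n)
        for sign in (-1, 1)
    ]
    edges = [
        (a, b)
        for a, b in combinations(vertices, 2)
        if any(x != -y for x, y in zip(a, b))  # skip opposite pairs a == -b
    ]
    return vertices, edges


def degree(vertex, edges):
    """Number of edges meeting at a vertex."""
    return sum(vertex in edge for edge in edges)


for n in range(1, 5):
    cube_v, cube_e = hypercube(n)
    octa_v, octa_e = cross_polytope(n)
    assert len(cube_v) == 2**n and len(octa_v) == 2 * n
    assert all(degree(v, cube_e) == n for v in cube_v)
    assert all(degree(v, octa_e) == 2 * n - 2 for v in octa_v)
```

For $n=2$ both constructions give a square, once with sides parallel to the axes and once with its vertices on the axes, which matches the two limiting taxicab and maximum-norm circles.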

Related to all this is the concept of duality for polyhedra. The dual of a polyhedron has one vertex per face, with edges connecting two vertices if the corresponding faces share an edge. Hypercubes and hyperoctahedra are dual to each other, so the number of faces of a hyperoctahedron is the number of vertices of the hypercube and vice versa.

So, going back to volumes, how many $n$-hyperoctahedra of the same radius can we fit inside an $n$-hypercube? This is where we can see that combinatorics comes into play. Finding the number is easy using the formula we obtained before, $\frac{V_n^\infty(r)}{V_n^1(r)}=n!$, so, by volume, we can fit $n!$ hyperoctahedra in a hypercube. Let's fix the orientation of a cube in place and number its vertices. Consider the number of ways we can do it, counting any two numberings which can be rotated to coincide as different (the cube's orientation is fixed in place). If the cube's orientation is not fixed in place (so two numberings are the same when one can be rotated into the other), then the number of ways to number a cube is $\frac{(n-1)!}{2^n}$, which is the number of hyperoctahedra that can fit inside a hypercube of one less dimension and half the diameter.
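Worked out from the volume formula, the ratio of the hypercube volume to the hyperoctahedron volume only needs $\Gamma(1)=\Gamma(2)=1$ and $\Gamma(n+1)=n!$ (for $p\rightarrow\infty$ the exponents $\frac{1}{p}$ and $\frac{n}{p}$ tend to $0$):

$$V_n^\infty(r)=\frac{\big(2r\,\Gamma(1)\big)^n}{\Gamma(1)}=(2r)^n,\qquad V_n^1(r)=\frac{\big(2r\,\Gamma(2)\big)^n}{\Gamma(n+1)}=\frac{(2r)^n}{n!},\qquad \frac{V_n^\infty(r)}{V_n^1(r)}=n!.$$

For $n=3$ and $r=1$ this is a cube of volume $8$ against an octahedron of volume $\frac{4}{3}$, a ratio of $6=3!$.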

In order to fill the space without leaving gaps, for many different numbers of dimensions $n$ we have to cut hyperoctahedra in pieces to pack them together. How much space we can fill by piling up polytopes is called the packing density, and when its maximum is $1$, as happens, for example, trivially with hypercubes in general and with hyperoctahedra for $n=2$ and $n=4$ dimensions, there exists a tiling with no gaps. If there are gaps, we average over infinite space to obtain the density (or average over a hypercube or other region (tile) which captures the periodic pattern (tiling or tessellation) and which we know can be packed). These properties are studied by combinatorics and probability, as they are related to finite and discrete sets (vertices, tilings) and densities.

We can make the game more interesting by thinking about how many numberings of a fixed hypercube exist with some family of numbers repeated (for example, primes can be repeated). Or numberings with random numbers with some distribution. In these cases we will get (on average, or asymptotically as the number of dimensions grows, or...) something between $n!$ and $1$, which we will be able to approximate with the Gamma function or the volume of a hyperball of $p$-norm. Many, if not all, of the $\pi$ and Gamma functions which appear in probability densities and combinatorics can benefit from these ideas or be interpreted in terms of them.

What is Pi, fundamentally?

So, my conclusion is that the value of $\pi$ is fundamentally tied to the Gamma function and the Euclidean distance. The resulting formulas written generally, like $\Gamma\Big(\frac{1}{2}\Big)=\sqrt{\pi}$, would not benefit conceptually or in terms of simplicity from changing $\pi$ for $\tau$; quite the opposite.

The radius should probably give

In the spirit of getting rid of unnecessary constants and maximizing platonicity, there are still some lingering twos in formulas like $$V_n^p(r)=\frac{\Big(2r\,\Gamma{\Big(\frac{1}{p}+1\Big)}\Big)^n}{\Gamma{\Big(\frac{n}{p}+1\Big)}}.$$ I think the reason is that the radius is not a fundamental concept of mathematical reality. Again, we should look at how one generalizes this concept.

I have come across this same problem several times in different places. The branch of mathematics which studies how volume measurement is generalized is called measure theory. Essentially, measure theory studies how to cut the space into little pieces ($\sigma$-algebras) and assign to each of them a number which is its generalized volume. In measure theory, when generalizing Lebesgue measure to Hausdorff measure, sets are measured by their diameter (to a power depending on the dimension). In convexity analysis, where one has convex bodies and needs to measure their width, the diameter is the way to measure it. Even with something as simple as generalizing a hypersphere, it is better to get rid of the radius and talk about the diameter. The diameter of any set is the distance between the two points of the set for which the distance is maximum, so it is defined generally, without any symmetry restriction, like the set having a center.

Using the diameter, rather than the radius or the side, is what gives us the most beautiful formula for the volume of a hyperball of norm $p$:

$$V_n^p(d)=\frac{\Big(\Gamma{\Big(\frac{1}{p}+1\Big)}\,d\Big)^n}{\Gamma{\Big(\frac{n}{p}+1\Big)}}.$$
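As a sanity check (my own substitution, using $\Gamma{\big(\frac{3}{2}\big)}=\frac{\sqrt{\pi}}{2}$, $\Gamma(2)=1$ and $\Gamma{\big(\frac{5}{2}\big)}=\frac{3\sqrt{\pi}}{4}$), setting $p=2$ recovers the familiar disc and ball written in terms of the diameter:

$$V_2^2(d)=\frac{\Big(\Gamma{\big(\frac{3}{2}\big)}\,d\Big)^2}{\Gamma(2)}=\frac{\pi d^2}{4},\qquad V_3^2(d)=\frac{\Big(\Gamma{\big(\frac{3}{2}\big)}\,d\Big)^3}{\Gamma{\big(\frac{5}{2}\big)}}=\frac{\pi d^3}{6}.$$

With $d=2r$ these are the usual $\pi r^2$ and $\frac{4}{3}\pi r^3$.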

Interesting ideas

From all this, we have got many interesting ideas for investigating generalizations. For example, can we define a $\pi$ for constant width objects? Can we find a family of distances for the other family of regular $n$-dimensional polytopes, the simplices (hypertetrahedra), which are self-dual (using both at the same time so that the norm is symmetric, or considering asymmetric seminorms)? Can we generalize the volume for other $p$ metrics, that is, find an appropriately defined measure derived from the $p$-norm? What are the formulas for volumes of hyperballs in that case? How about $\pi$ for other norms not in the family we looked at, for example norms where the balls are polyhedral shapes? Can we look at $\pi$ and the Gamma function in different formulas of physics and mathematics and find the ties with these concepts? What are the packing densities of different families of balls?

These questions are beautiful, and I am sure that they will take us to beautiful and interesting mathematics, some already discovered, some new, some in between.

Have fun and let me know what you find!